pacman::p_load(dplyr,

tidyr,

broom,

tinyplot,

ggplot2)Chapter 4

Overview

Goals

We’re going to look at testing null hypotheses.

Set up

Load packages and set theme.

tinytheme("ipsum",

family = "Roboto Condensed",

palette.qualitative = "Tableau 10",

palette.sequential = "agSunset")theme_set(

theme_minimal(base_family = "Roboto Condensed") +

theme(panel.grid.minor = element_blank())

)

tableau10 <- c("#5778a4", "#e49444", "#d1615d", "#85b6b2", "#6a9f58",

"#e7ca60", "#a87c9f", "#f1a2a9", "#967662", "#b8b0ac")

options(

ggplot2.discrete.colour = \() scale_color_manual(values = tableau10),

ggplot2.discrete.fill = \() scale_fill_manual(values = tableau10)

)Load saved GSS data.

gss2024 <- readRDS(file = here::here("data", "gss2024.rds")) |>

haven::zap_labels()Null hypothesis

Setting up the null model

Null hypothesis: the average American adult in 2024 watches 3 hours of TV per day. By subtracting 3 from the observed value, we can make it so the null hypothesis is 0. That makes it easy to use lm(y ~ 0) to set up the null model.

d <- gss2024 |>

select(tvhours) |>

drop_na() |>

mutate(tvdev = tvhours - 3)

m0 <- lm(tvdev ~ 0, data = d)

m1 <- lm(tvdev ~ 1, data = d)

sse0 <- deviance(m0)

sse1 <- deviance(m1)

observed_pre <- (sse0 - sse1) / sse0

observed_pre[1] 0.008343581The SSE is improved by estimating \(\beta_0\), but was it improved more than we’d expect by chance?

Creating a null distribution

Here’s a quick example where we make data where the null hypothesis is TRUE. Then we can use it to check whether we could get numbers that big by chance.

fake_data <- tibble(tvhours = rnorm(2152, mean = 3, sd = 3.3))

fake_data <- fake_data |>

mutate(tvdev = tvhours - 3)

m0 <- lm(tvdev ~ 0, data = fake_data)

m1 <- lm(tvdev ~ 1, data = fake_data)

sse0 <- deviance(m0)

sse1 <- deviance(m1)

(sse0 - sse1) / sse0[1] 0.0002572073Convert this idea to a function so we can do this many times. The basic idea is to simulate data where the null is true, then calculate the PRE (which won’t be exactly zero because of sampling variability).1

1 We could create a better null distribution here by using a different distribution than the normal for our fake tvhours data. As you saw earlier the data itself is actually NOT normally distributed. We could use a count distribution but we’re not ready for that! We’ll talk about that more later.

calc_null_pre <- function() {

null_data <- tibble(tvdev = rnorm(2152, 0, 3.3))

m0 <- lm(tvdev ~ 0, data = null_data)

m1 <- lm(tvdev ~ 1, data = null_data)

pre <- (deviance(m0) - deviance(m1)) / deviance(m0)

return(pre) # this returns the observed PRE from that simulation

}Create a skeleton and append 1000 null PRE simulations.

null_sims <- tibble(sim_number = 1:1000) |>

rowwise() |>

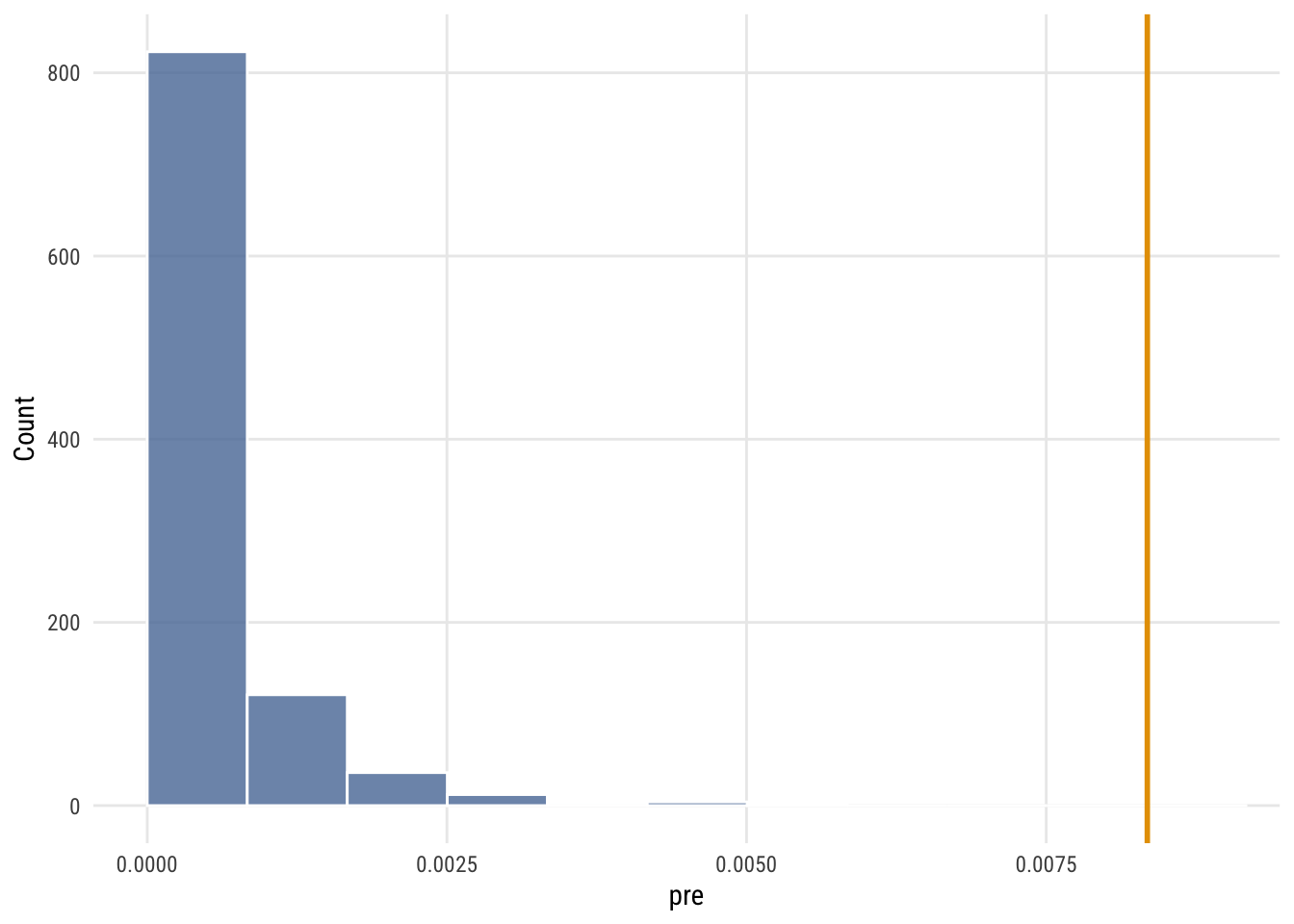

mutate(pre = calc_null_pre())The test

The idea is to compare the real world to the world implied by the null hypothesis. So we’ll show how the OBSERVED PRE compares to the distribution of NULL PREs.

Show code

plt(~ pre,

data = null_sims,

type = type_hist(breaks = "Sturges"),

xlim = c(0, .009))

plt_add(type = type_vline(v = observed_pre),

col = "#E69F00",

lwd = 2)

Show code

ggplot(null_sims, aes(x = pre)) +

geom_histogram(bins = nclass.Sturges(null_sims$pre),

boundary = 0,

fill = tableau10[1], color = "white", alpha = 0.8) +

geom_vline(xintercept = observed_pre, color = "#E69F00", linewidth = 1) +

coord_cartesian(xlim = c(0, .009)) +

labs(x = "pre", y = "Count")

Based on the formula in the book, what F would that be equivalent to?

fstat <- (observed_pre / 1) / ((1 - observed_pre) / (nrow(d) - 1))

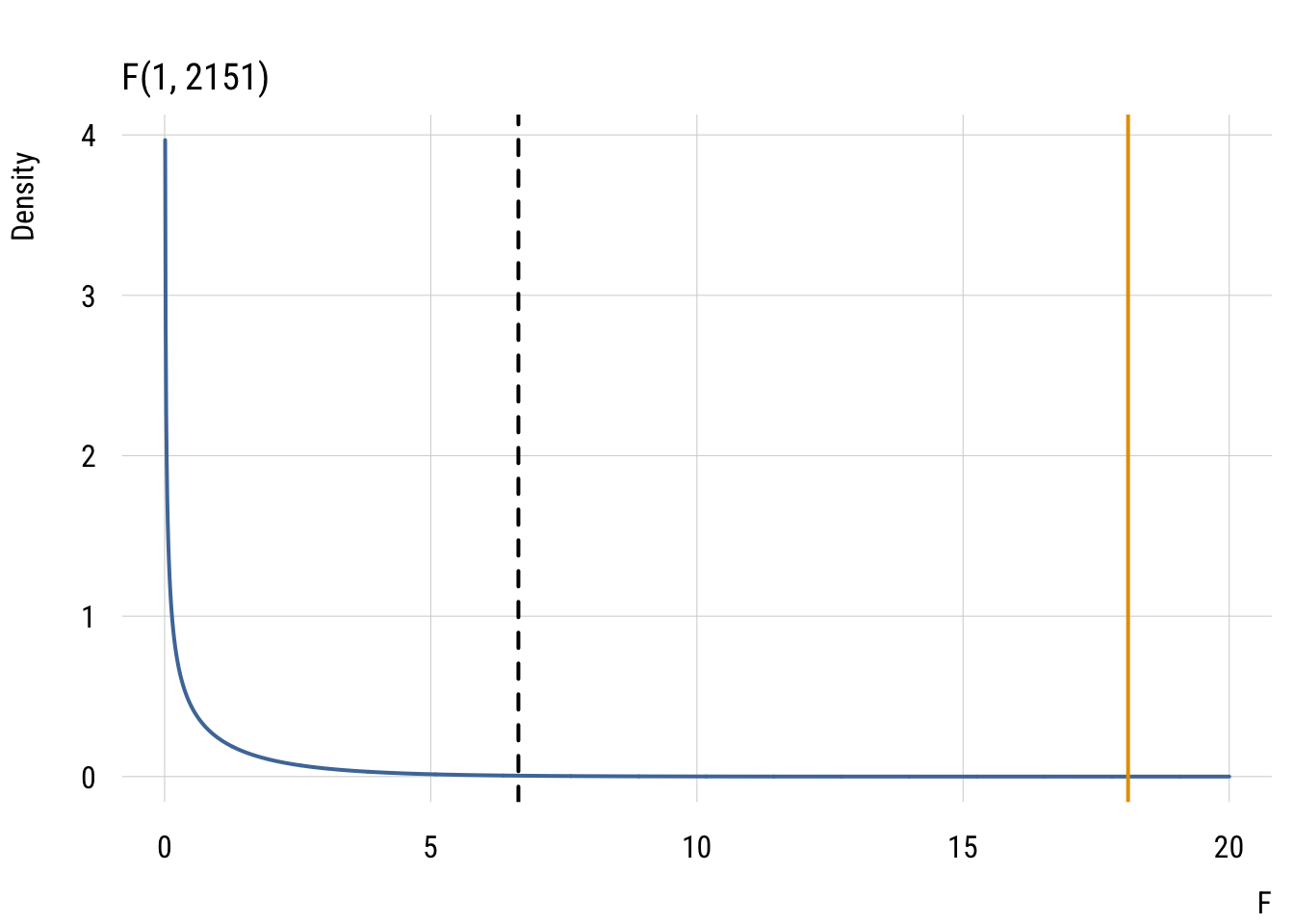

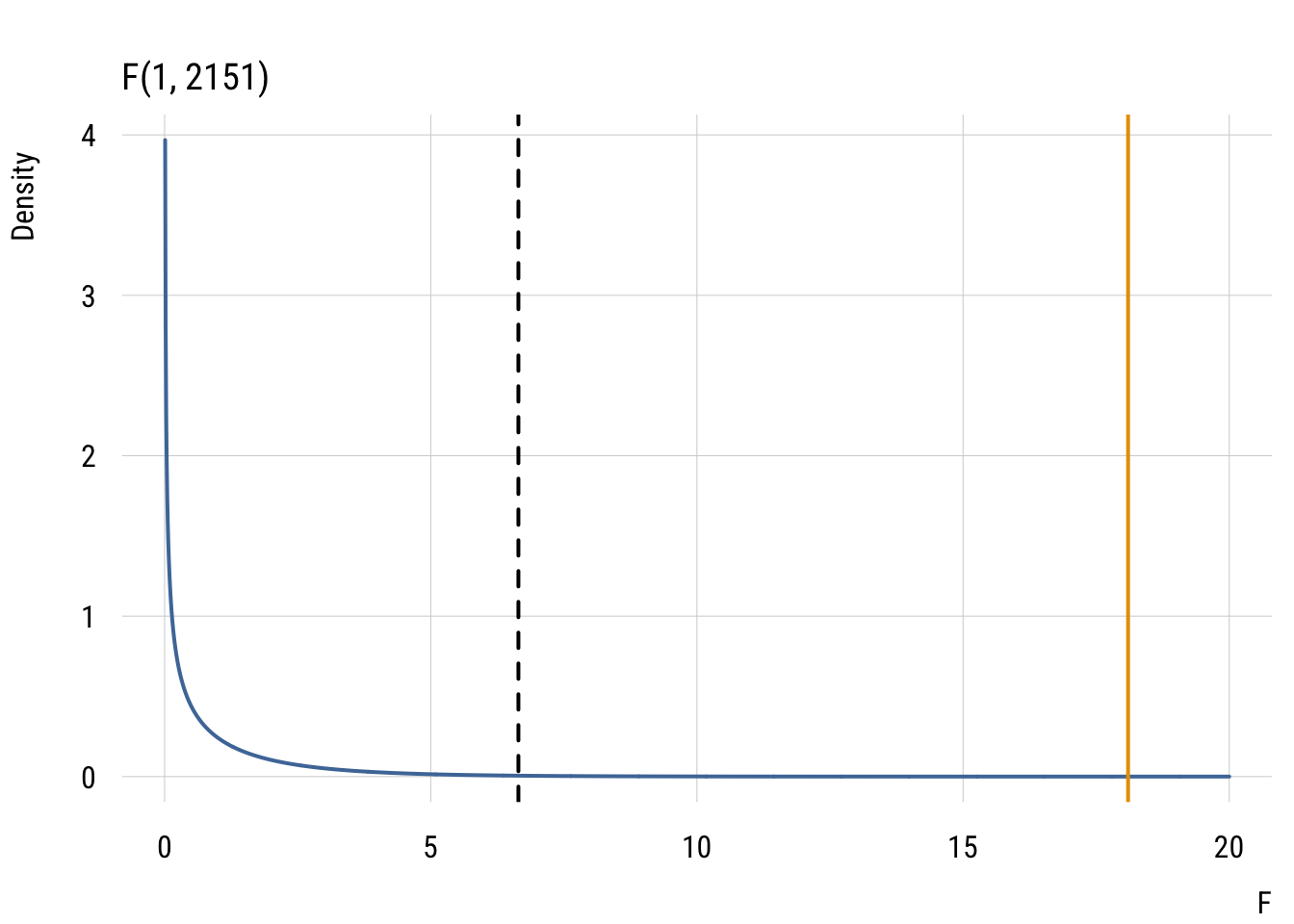

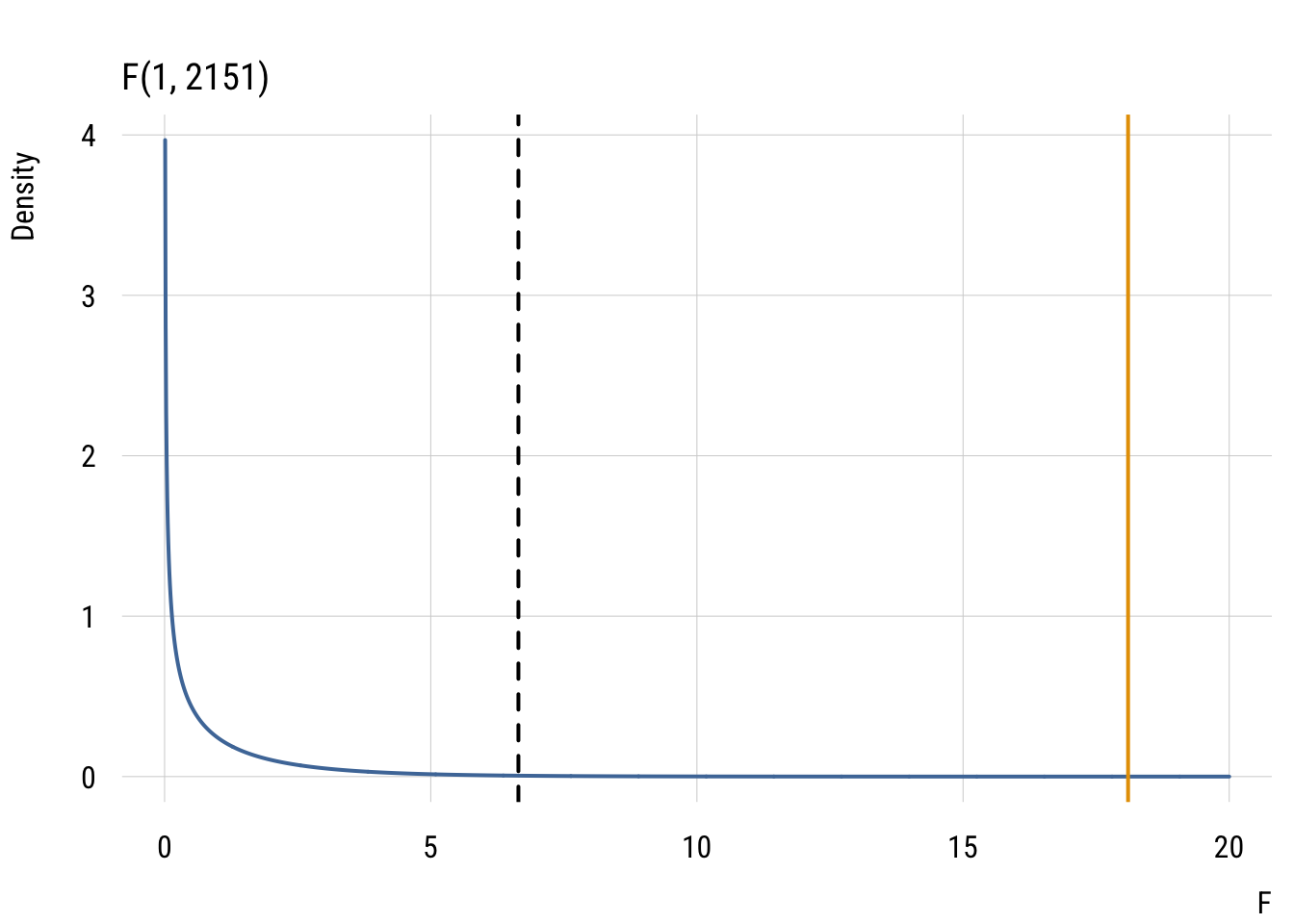

fstat[1] 18.09805F-distribution with numerator df = 1 and denominator df = 2151. The dotted line is the critical value and the solid line is the observed value (wayyyy above that).

Data prep

df1 <- 1

df2 <- 2151

alpha <- .01

f_obs <- 18.1

f_crit <- qf(1 - alpha, df1, df2)

x_max <- 20

# grid for the curve

x <- seq(0, x_max, length.out = 2000)

y <- df(x, df1 = df1, df2 = df2)Show code

plt(y ~ x,

type = "l",

main = "F(1, 2151)",

xlab = "F",

ylab = "Density",

xlim = c(0, x_max),

lwd = 2)

abline(v = f_crit, lty = 2, lwd = 2)

abline(v = f_obs, col = "#E69F00", lwd = 2)

Show code

ggplot(data.frame(x = x, y = y), aes(x = x, y = y)) +

geom_line(linewidth = 1, color = tableau10[1]) +

geom_vline(xintercept = f_crit, linetype = "dashed", linewidth = 1) +

geom_vline(xintercept = f_obs, color = "#E69F00", linewidth = 1) +

coord_cartesian(xlim = c(0, x_max)) +

labs(title = "F(1, 2151)", x = "F", y = "Density")

You can get F* (the critical value) from the book. Or we can do it using functions in R (as we did above). Remember that there is nothing sacred about 95% or 99% or any of that.

qf(.99, 1, 2151)[1] 6.646687You can also get the p-value from functions rather than from the book.

1 - pf(fstat, 1, 2151)[1] 2.188091e-05In any case, we will be rejecting the null hypothesis here!