pacman::p_load(dplyr,

tidyr,

stringr,

tinyplot,

ggplot2)Information criteria*

Goals

As we’ve seen so far, one of the problems in choosing a model is that more complicated models will always get at least a little closer to the data. So we can’t simply choose the model with the lowest RSS or deviance. This is the core problem of model selection

All of the approaches we’ve seen so far (i.e., \(t\)-test, \(F\)-test, likelihood ratio test) control the Type I error rate, or probability of a false positive. That is, they limit the probability we claim something is true when it actually isn’t. This is the meaning of setting \(\alpha\) to, say, .05.

In this section, we’re going to look at approaches to model selection that are not based on null hypothesis significance testing.

Set up

Let’s load the packages we’ll need for this.

tinytheme("ipsum",

family = "Roboto Condensed",

palette.qualitative = "Tableau 10",

palette.sequential = "agSunset")theme_set(

theme_minimal(base_family = "Roboto Condensed") +

theme(panel.grid.minor = element_blank())

)

tableau10 <- c("#5778a4", "#e49444", "#d1615d", "#85b6b2", "#6a9f58",

"#e7ca60", "#a87c9f", "#f1a2a9", "#967662", "#b8b0ac")

options(

ggplot2.discrete.colour = \() scale_color_manual(values = tableau10),

ggplot2.discrete.fill = \() scale_fill_manual(values = tableau10)

)We won’t use the states data until later but let’s make it now.

state.x77_with_names <- as_tibble(state.x77,

rownames = "state")

states <- bind_cols(state.x77_with_names,

region = state.division) |> # both are in base R

janitor::clean_names() |> # lower case and underscore

mutate(south = as.integer( # make a south dummy

str_detect(as.character(region),

"South")))AIC

The AIC or Akaike’s Information Criterion is not a null hypothesis test in the way that, say, the likelihood ratio test is. It’s rather a way of evaluating which of two models would do a better job predicting a different dataset that was created by the same data-generating process.

Definition

The formula is:

\[\text{AIC} = D + 2k\]

where \(D\) is the deviance and \(k\) is the number of estimated parameters (e.g., 3 for a simple regression).

The AIC decision rule is that we want to, when comparing two models, take the model with the lower AIC. In practice, it’s really that simple. But let’s talk about why.

The logic is that we want \(D\) to go down, but not at the expense of adding a bunch of junk parameters. So there’s a penalty for adding additional betas. Adding a new parameter needs to make the deviance go down by at least 2 for the model to be considered better.

Motivation: out-of-sample prediction

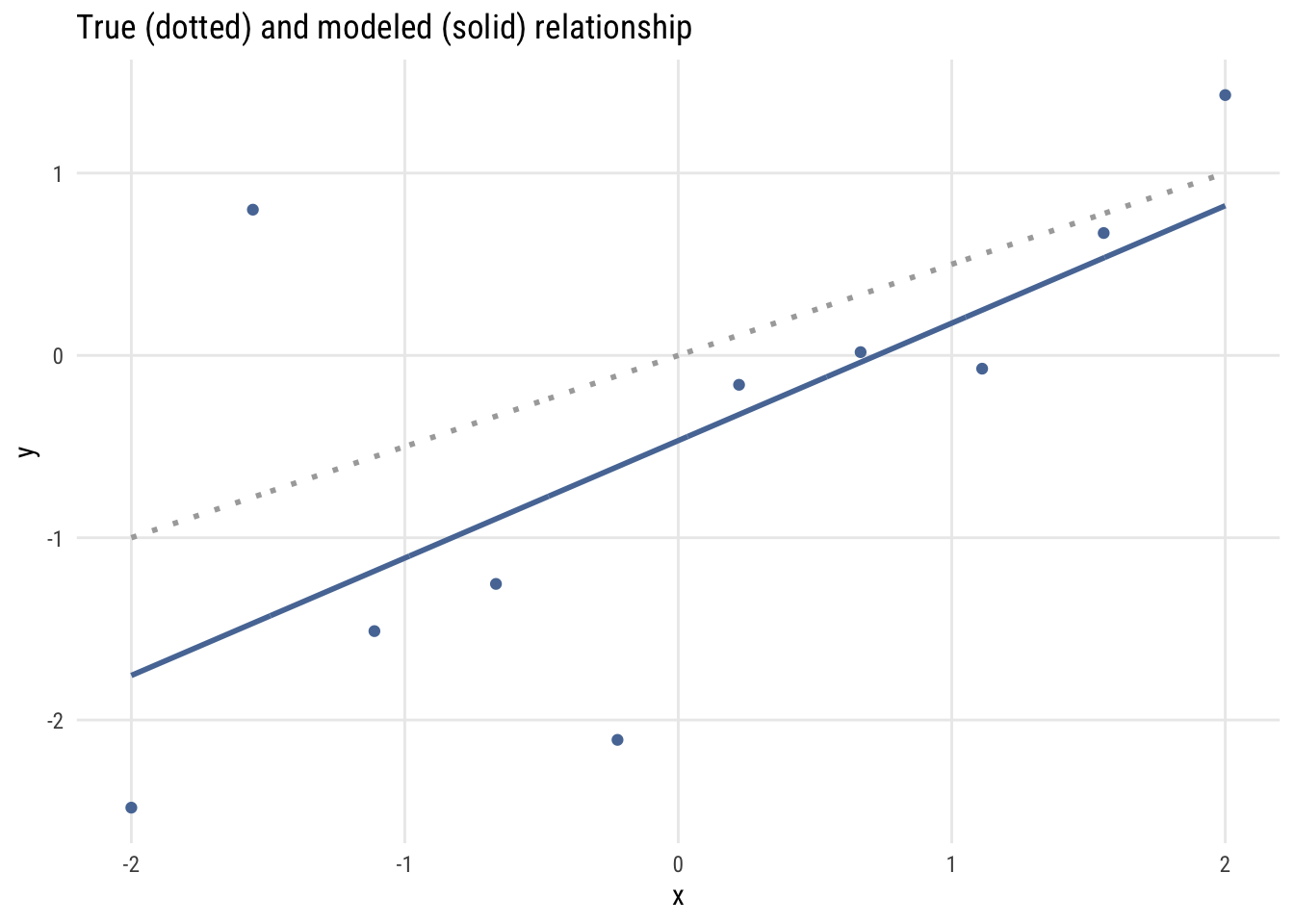

Consider the following simulated data, which is based on this model:

\[\begin{align} Y_i &\sim \mathcal{N}(\mu_i, 1) \\ \mu_i &= 0 + .5X_i \end{align}\]

Now let’s look at a finite sample from this process.

Data prep

set.seed(12)

x <- seq(from = -2, to = 2, length = 10)

y <- 0 + .5*x + rnorm(n = 10, mean = 0, sd = 1)

fit <- lm(y ~ x)

newdata <- data.frame(x = seq(-2, 2, length = 1000))

newdata$true <- newdata$x * .5

newdata$y <- predict(fit, newdata = newdata)Show code

plt(y ~ x,

type = "p",

main = "True (dotted) and modeled (solid) relationship")

plt_add(true ~ x, data = newdata, type = "l", lw = 1, lty = 3, color = "darkgray")

plt_add(y ~ x, data = newdata, type = "l", lw = 1.5, col = palette("Tableau 10")[1])

Show code

ggplot() +

geom_point(data = data.frame(x = x, y = y), aes(x = x, y = y),

color = tableau10[1]) +

geom_line(data = newdata, aes(x = x, y = true),

linetype = "dotted", color = "darkgray", linewidth = 1) +

geom_line(data = newdata, aes(x = x, y = y),

color = tableau10[1], linewidth = 1) +

labs(title = "True (dotted) and modeled (solid) relationship",

x = "x", y = "y")

I have drawn the data and the fitted regression line, which, of course, isn’t exactly the same as the actual data generating process. The estimated regression line consistently underestimates the value of \(Y_i\) that would come from the true model.

We want to do better if we can, so maybe we should make the model more complicated? Here’s us trying to use more and more complex models (i.e., higher degree polynomials) to improve the fit.

Data prep

newdata$y2 <- predict(lm(y ~ poly(x, 2)), newdata = newdata)

newdata$y3 <- predict(lm(y ~ poly(x, 3)), newdata = newdata)

newdata$y4 <- predict(lm(y ~ poly(x, 4)), newdata = newdata)

newdata$y5 <- predict(lm(y ~ poly(x, 5)), newdata = newdata)Show code

plt(y ~ x, type = "p", main = "Modeling Y with polynomials")

plt_add(true ~ x, data = newdata, type = "l", lw = 1, lty = 3, color = "darkgray")

plt_add(y ~ x, data = newdata, type = "l", lw = 1.5, col = palette("Tableau 10")[1])

plt_add(y2 ~ x, data = newdata, type = "l", lw = 1.5, col = palette("Tableau 10")[3])

plt_add(y3 ~ x, data = newdata, type = "l", lw = 1.5, col = palette("Tableau 10")[4])

plt_add(y4 ~ x, data = newdata, type = "l", lw = 1.5, col = palette("Tableau 10")[5])

plt_add(y5 ~ x, data = newdata, type = "l", lw = 1.5, col = palette("Tableau 10")[6])

Show code

newdata_long <- tidyr::pivot_longer(newdata,

cols = c(y, y2, y3, y4, y5),

names_to = "degree", values_to = "pred")

ggplot() +

geom_point(data = data.frame(x = x, y = y), aes(x = x, y = y)) +

geom_line(data = newdata, aes(x = x, y = true),

linetype = "dotted", color = "darkgray", linewidth = 1) +

geom_line(data = newdata_long, aes(x = x, y = pred, color = degree),

linewidth = 1) +

scale_color_manual(

values = setNames(tableau10[c(1,3,4,5,6)], c("y","y2","y3","y4","y5")),

labels = c("y" = "degree 1", "y2" = "degree 2", "y3" = "degree 3",

"y4" = "degree 4", "y5" = "degree 5")) +

labs(title = "Modeling Y with polynomials",

x = "x", y = "y", color = "Model") +

theme(legend.position = "none")

The polynomials end up “chasing” weird data points in ways that actually make our true understanding worse. This is called overfitting and it’s the main reason we don’t want to make models too complicated.

Why 2 times k?

The number “2” in the AIC formula isn’t arbitrary. It’s because adding extra parameters is expected to make the deviance worse out of sample at precisely the rate of two per “extra” parameter.

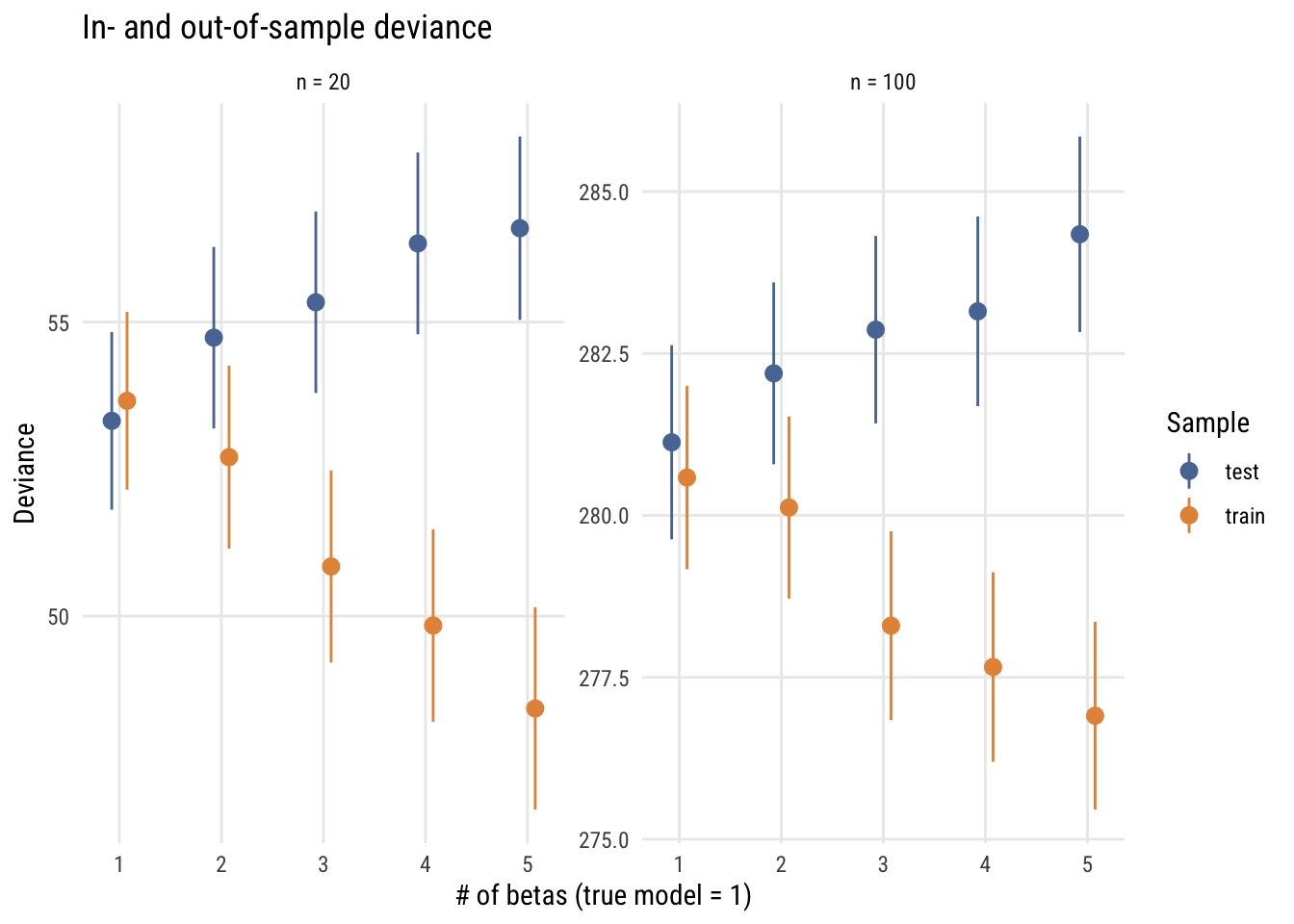

Here’s a simulation based loosely on the one in Richard McElreath’s Statistical Rethinking. The steps are:

Draw a sample of size N from a known process (the same as the model above); call this the training set.

Estimate a series of regression models on the training set, ranging from a linear model to a 5th degree polynomial.

Assess how well these models predict another data set of the same N drawn from the same process.

Here are the results for two situations, N = 20 and N = 100 (not that the N really matters here).

Data prep

# function to compare deviances

get_tt_devs <- function(n, b0, b1, s, k) {

x <- seq(-2, 2, length = n)

y <- b0 + b1*x + rnorm(n, 0, s)

fit <- lm(y ~ poly(x, k))

ll_train <- as.numeric(-2 * logLik(fit))

test_y <- b0 + b1*x + rnorm(n, 0, s)

pred_y <- predict(fit)

test_fit <- lm(test_y ~ pred_y)

ll_test <- as.numeric(-2 * logLik(test_fit))

tibble::tibble(train = ll_train, test = ll_test)

}

set.seed(1234)

mysims <- expand_grid(simnum = 1:1000, k = 1:5, n = c(20, 100)) |>

rowwise() |>

mutate(outs = list(get_tt_devs(n = n, b0 = 0, b1 = .5, s = 1, k = k))) |>

unnest_wider(outs) |>

group_by(k, n) |>

summarize(

across(c(train, test), list(mean = mean, sd = sd), .names = "{.col}_{.fn}"),

.groups = "drop") |>

pivot_longer(cols = -c(k, n), names_to = c("sample", ".value"), names_sep = "_") |>

mutate(ymax = mean + sd/sqrt(n),

ymin = mean - sd/sqrt(n),

nfac = ordered(n, labels = c("n = 20", "n = 100")))Show code

plt(mean ~ k | sample,

data = mysims,

ymin = ymin,

ymax = ymax,

type = type_pointrange(dodge = .01),

main = "In- and out-of-sample deviance",

ylab = "Deviance",

xlab = "# of betas (true model = 1)",

facet = nfac,

facet.args = list(free = TRUE))

Show code

ggplot(mysims, aes(x = k, y = mean, color = sample,

ymin = ymin, ymax = ymax)) +

geom_pointrange(position = position_dodge(0.3)) +

facet_wrap(~nfac, scales = "free_y") +

labs(

title = "In- and out-of-sample deviance",

y = "Deviance",

x = "# of betas (true model = 1)",

color = "Sample")

This graph shows how this works for the two sample sizes. As you can see, increasingly complex models do an increasingly good job of fitting the training (in-sample) data but do an increasingly bad job of fitting the test (out-of-sample) data. In fact, the deviance difference is 2 per excess parameter. This is why the AIC’s penalty is \(2k\).

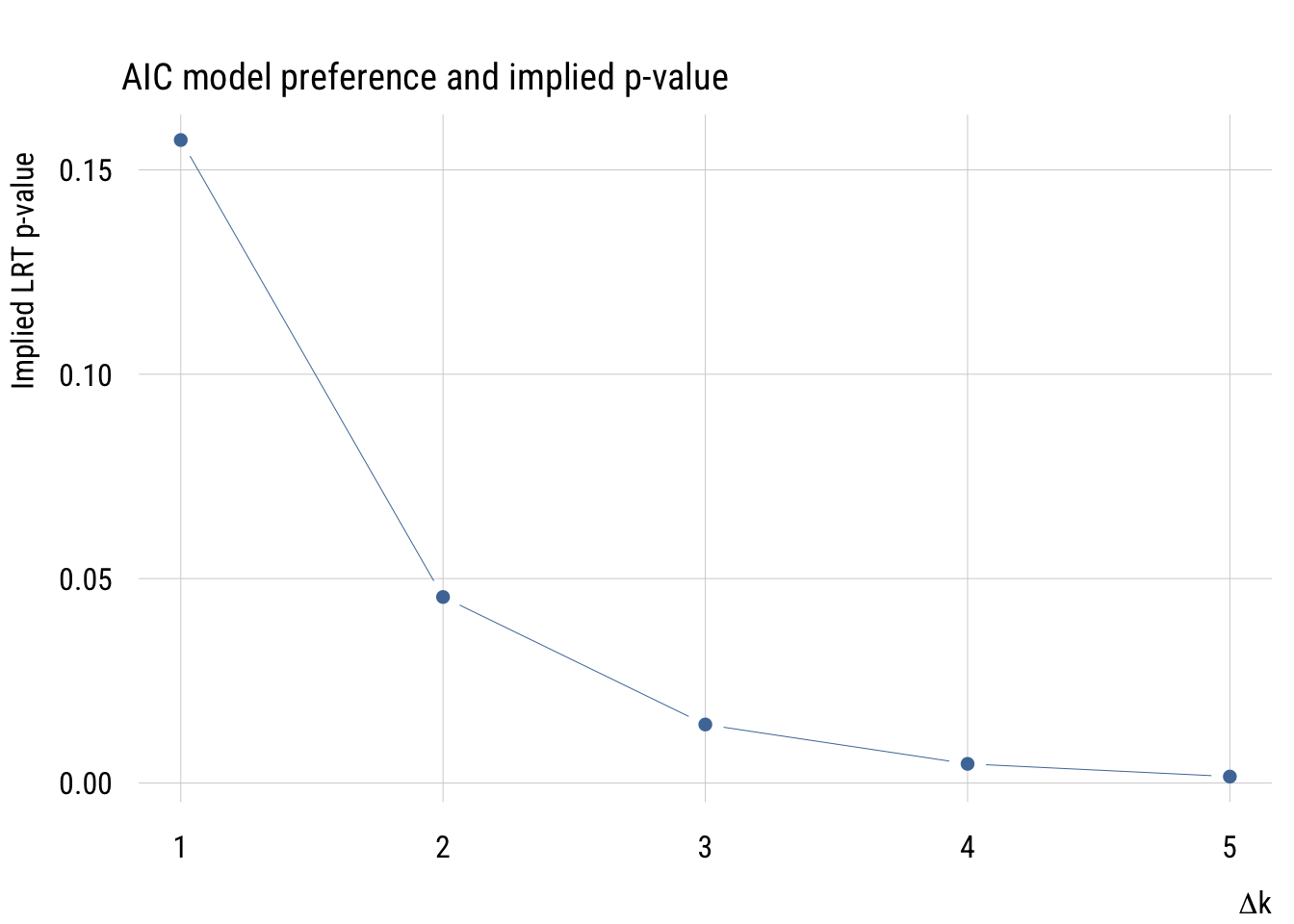

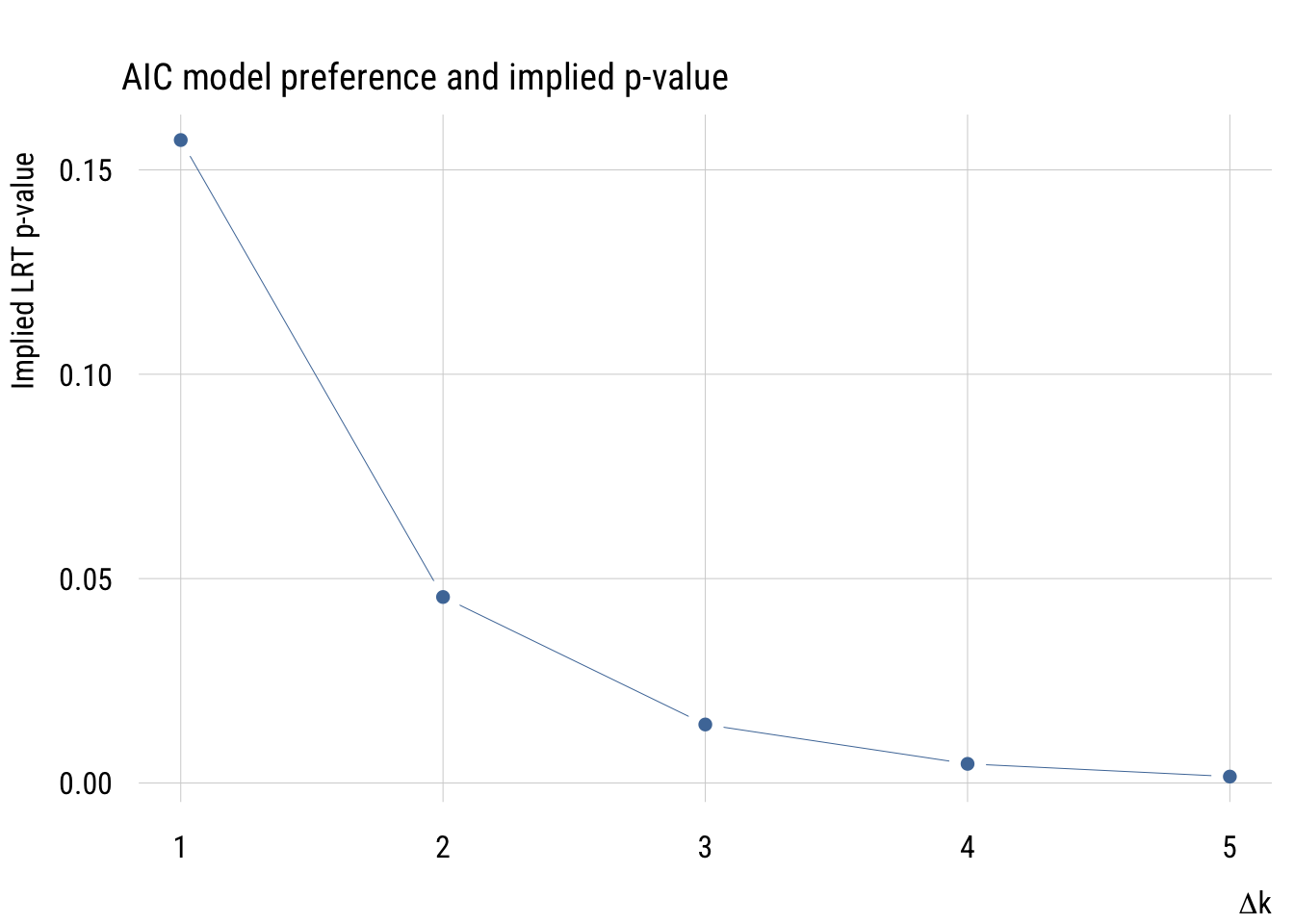

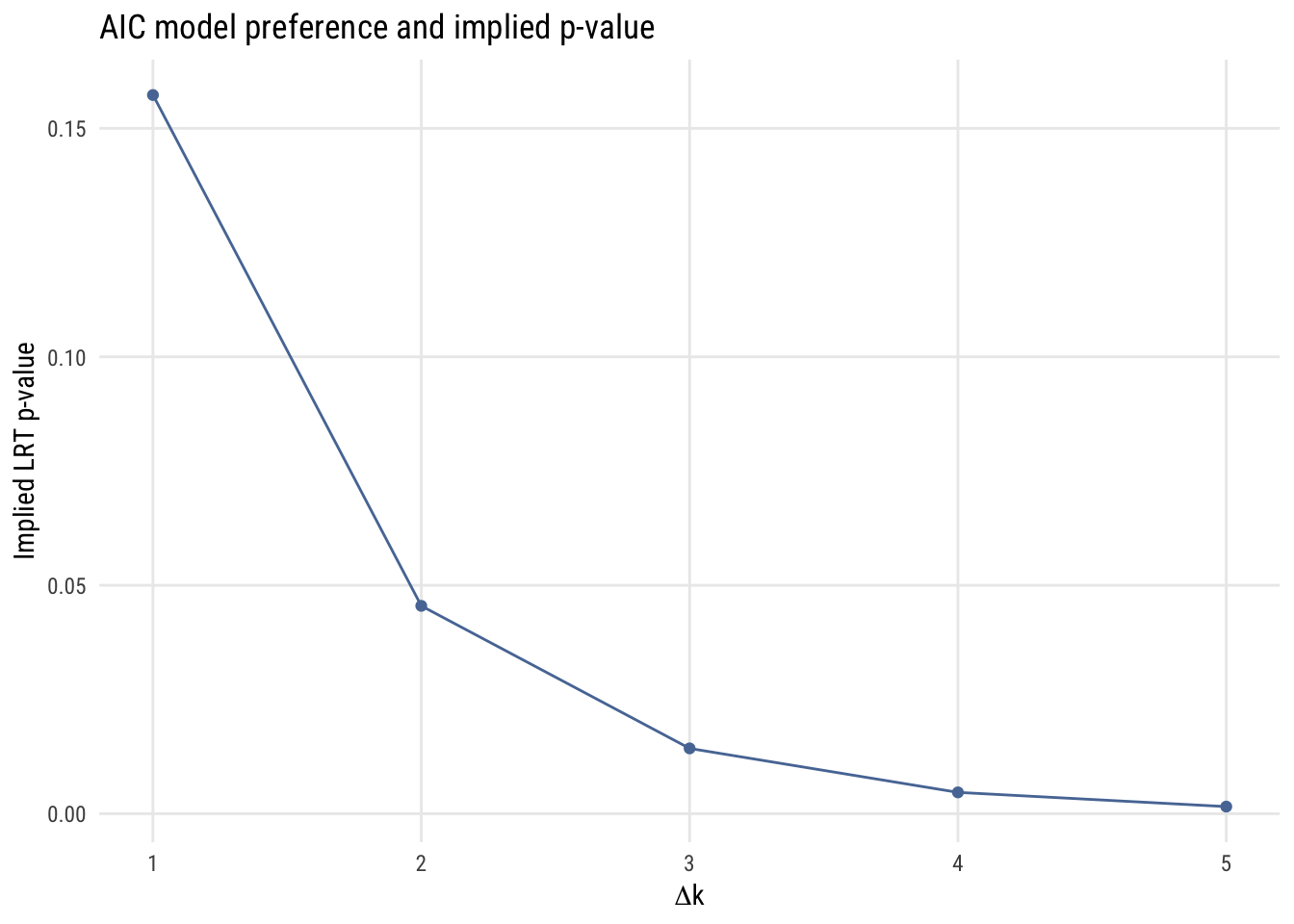

Implied p-value

If we’re considering two models, one more complicated and one less complicated, we can think about the implied p-value of the comparison. That is, we can ask what the p-value would be to make the same decision of rejecting the simpler model.

Data prep

aic_to_p <- tibble(

delta_k = 1:5,

p = 2 * (1 - pnorm(sqrt(2 * delta_k)))

)Show code

plt(p ~ delta_k,

data = aic_to_p,

type = "b",

main = "AIC model preference and implied p-value",

ylab = "Implied LRT p-value",

xlab = expression(Delta * "k"))

Show code

ggplot(aic_to_p, aes(x = delta_k, y = p)) +

geom_line(color = tableau10[1]) +

geom_point(color = tableau10[1]) +

labs(

title = "AIC model preference and implied p-value",

y = "Implied LRT p-value",

x = expression(Delta * "k"))

As we see here, the implied p-value for a one-parameter comparison is much more “liberal” than any p-value that one would choose.

Example use

Let’s do a very simple model comparison using AIC.

m0 <- lm(life_exp ~ 1, data = states)

m1 <- lm(life_exp ~ 1 + hs_grad, data = states)

AIC(m0, m1) df AIC

m0 2 174.3291

m1 3 155.6309As you can see, AIC prefers the model that conditions our expectations of life_exp on hs_grad.

BIC

The BIC or Bayesian Information Criterion is like the AIC, but different. In practice, it’s the same as the AIC except with a larger penalty term. It also gets used in the same basic way: take the model with the lower BIC.

Definition

Here is the formula for the BIC:

\[\text{BIC} = D + k\log n \]

Whereas the AIC always has a penalty term of two times the number of parameters, the BIC has a penalty term that’s the natural log of the sample size times the number of parameters. So for a regression on a model with N = 300, adding an additional parameter would have to decrease the deviance by more than 5.7 for that to be considered an improvement.

Motivation: identifying the true model

Whereas the AIC’s motivation is to approximate out-of-sample prediction, the BIC’s goal is to figure out which of the models that we’re considering is most likely to be the “true model” that generated the data1. (Of course that assumes that the true model is in the set that we’re considering, which is usually unlikely.)

1 I’m not going to do full justice to the BIC here. If you want to know more, see Adrian Raftery’s classic paper “Bayesian Model Selection in Social Research”. It is worth taking the time to read.

Why k log n?

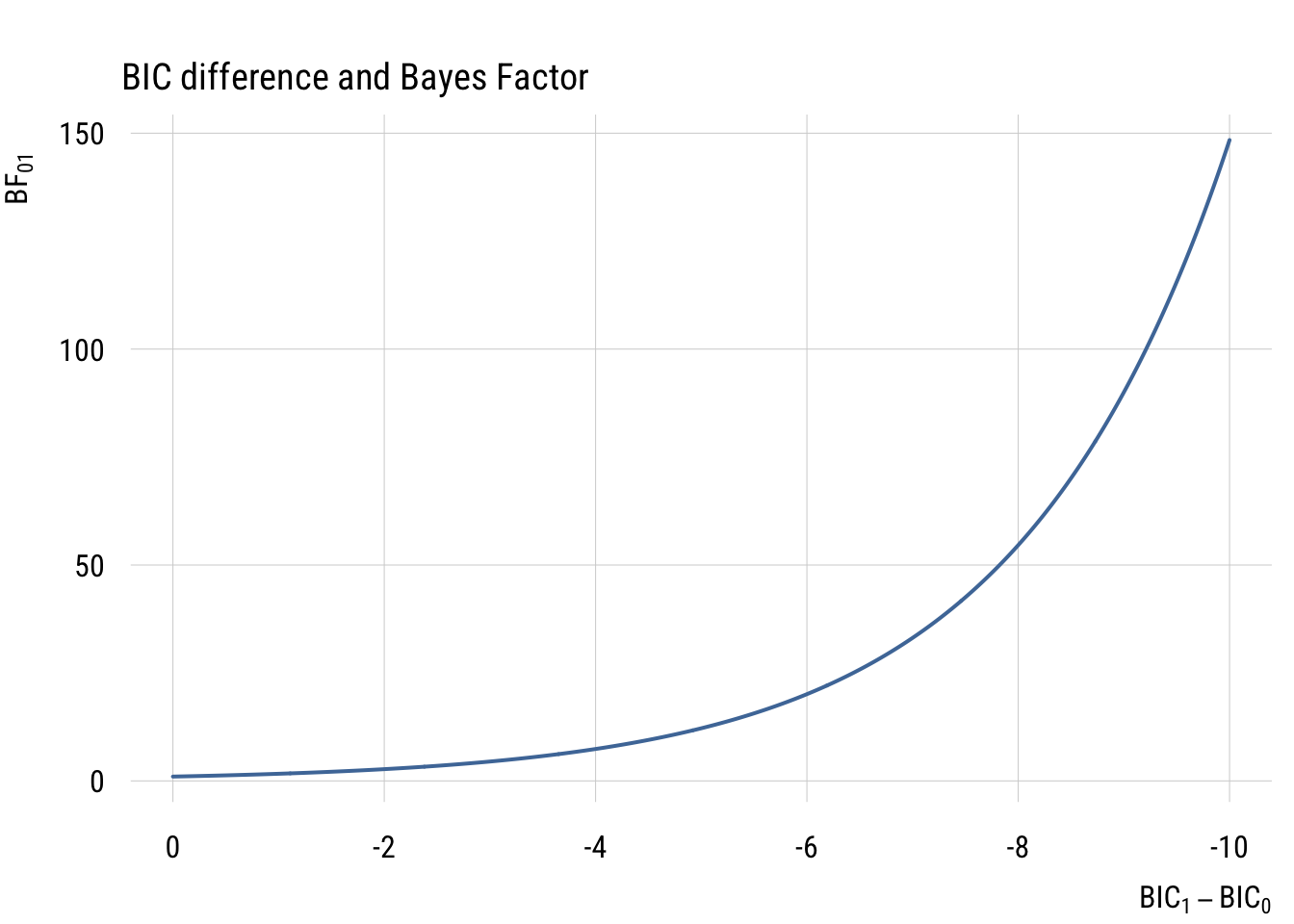

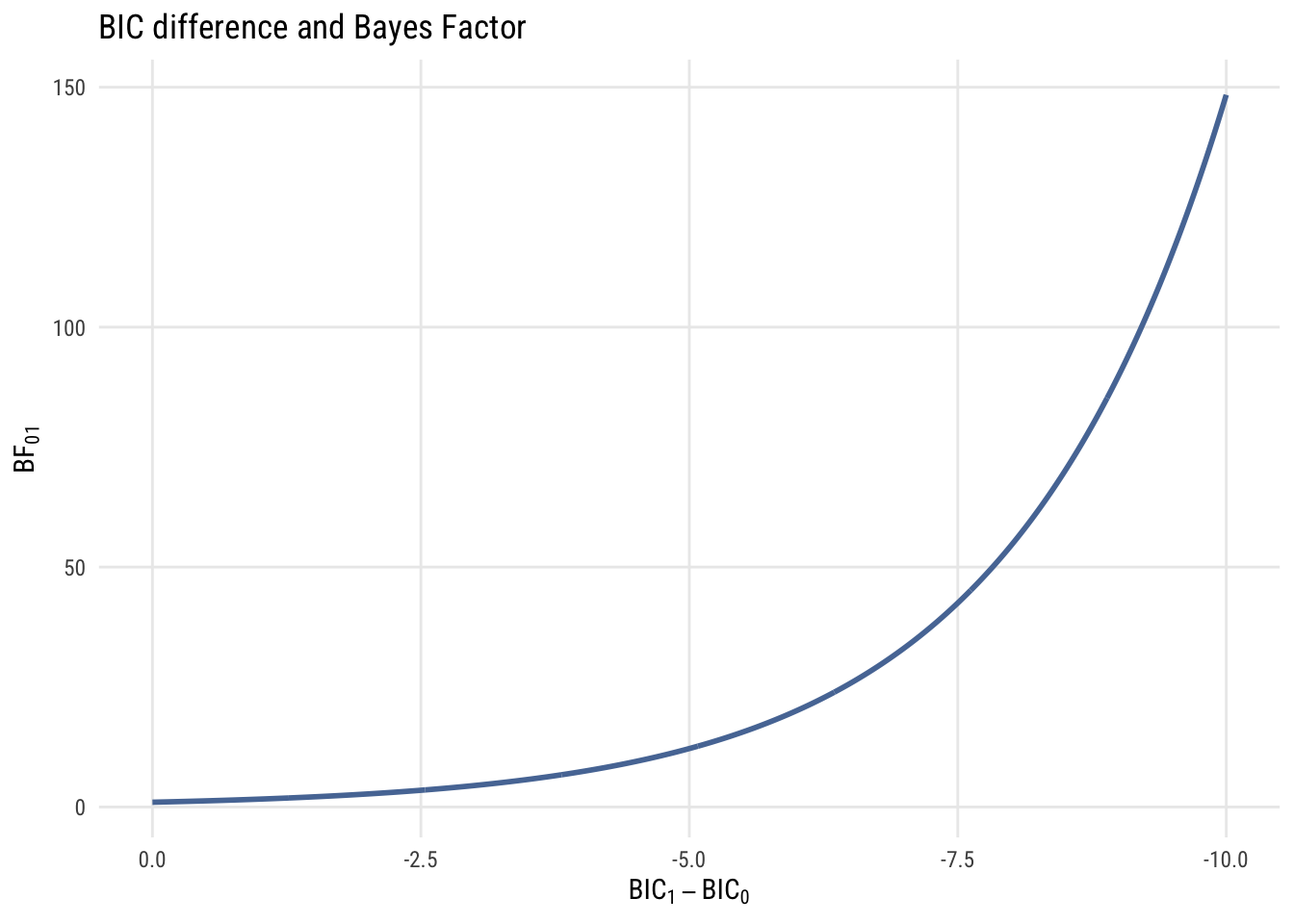

The different penalty term comes here because the BIC is set up to do a different job. The difference between two models’ BICs is a large-sample approximation to a Bayes’ Factor (BF). The BF is how much more likely one model is compared to another. So if, say, the BF of \(M_1\) vs. \(M_0\) is 4, that means that \(M_1\) is four times more likely to be the true model. If those are the only two models we are considering, then \(M_1\) has an 80% chance of being the true model.

We can convert the difference between two BICs into a BF2 as follows:

2 This formula (and this whole section) assumes we have no prior preference for either model before looking at the data. That’s not something we’re going to discuss further here. But go read the paper!

\[ \text{BF}_{10} = \exp \left( -\frac{1}{2} \Delta \text{BIC} \right) \]

Just to build your intution, here’s a graph showing the relationship between BIC difference and BF.

Data prep

delta_bic <- seq(-10, 0, .01)

bf <- exp(-1/2 * delta_bic)Show code

plt(bf ~ delta_bic,

type = "l",

lw = 2,

xlim = c(0, -10),

main = "BIC difference and Bayes Factor",

ylab = expression("BF"["01"]),

xlab = expression("BIC"[1] - "BIC"[0]))

Show code

ggplot(data.frame(delta_bic = delta_bic, bf = bf), aes(x = delta_bic, y = bf)) +

geom_line(linewidth = 1, color = tableau10[1]) +

scale_x_reverse(limits = c(0, -10)) +

labs(

title = "BIC difference and Bayes Factor",

y = expression("BF"["01"]),

x = expression("BIC"[1] - "BIC"[0]))

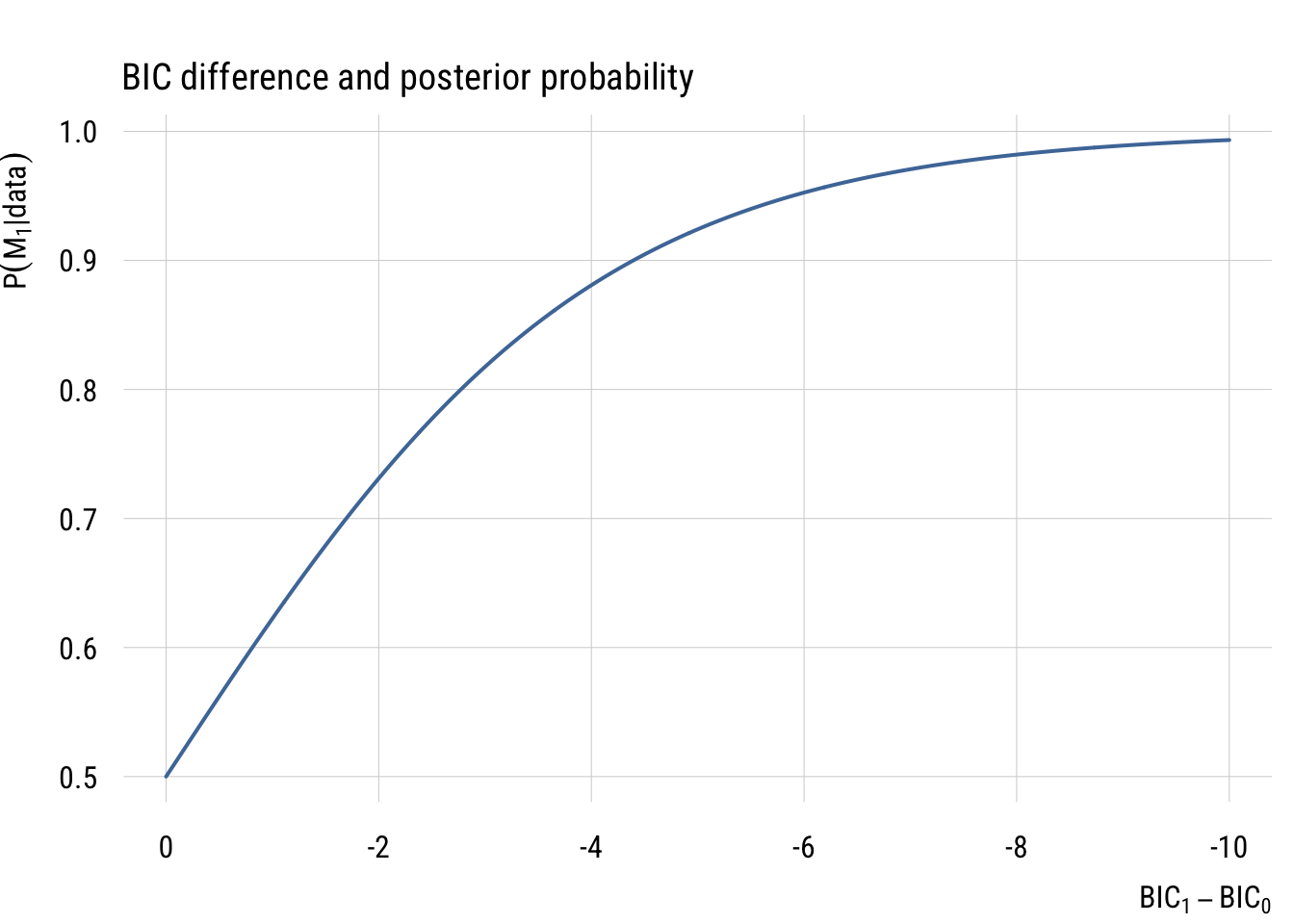

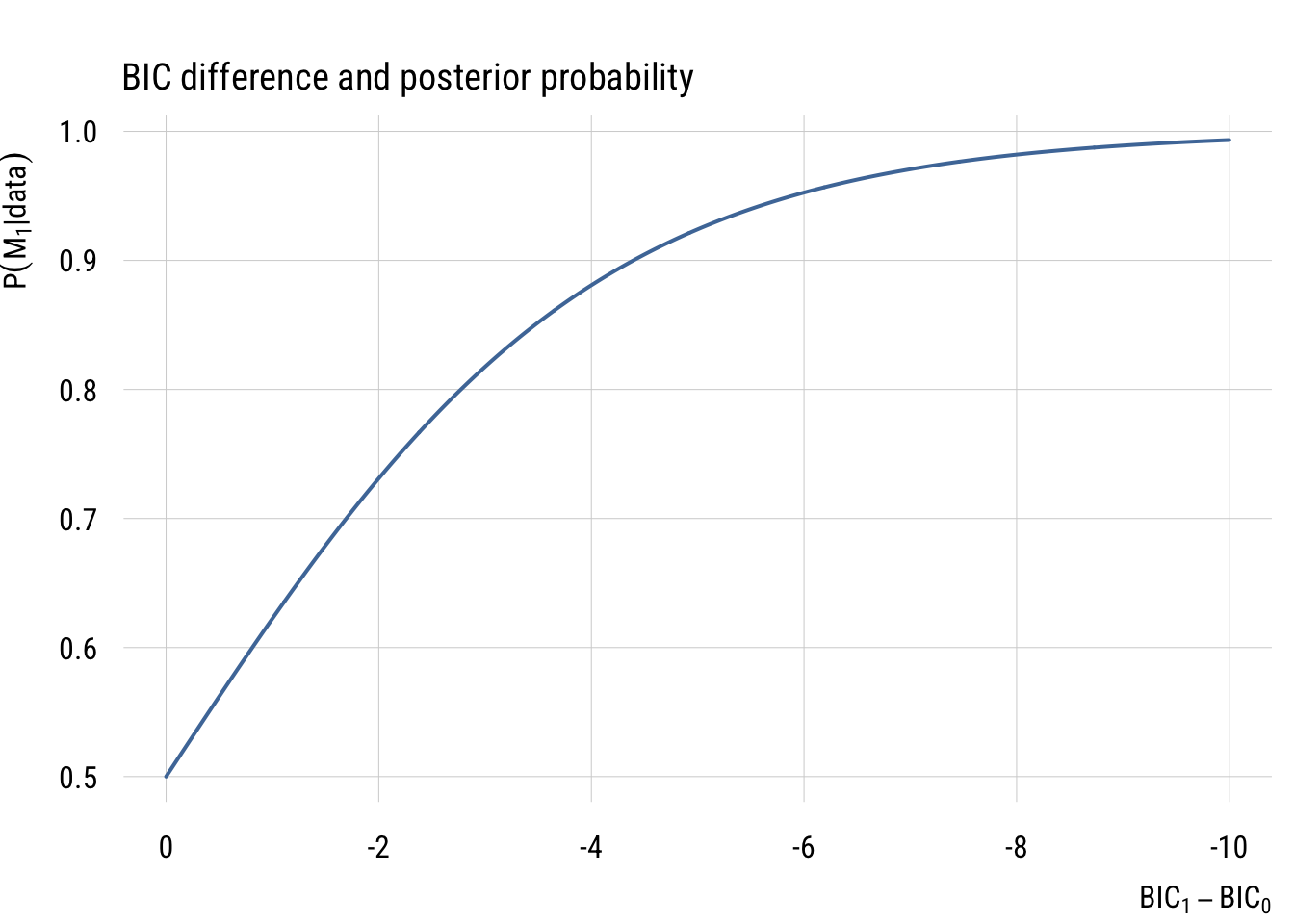

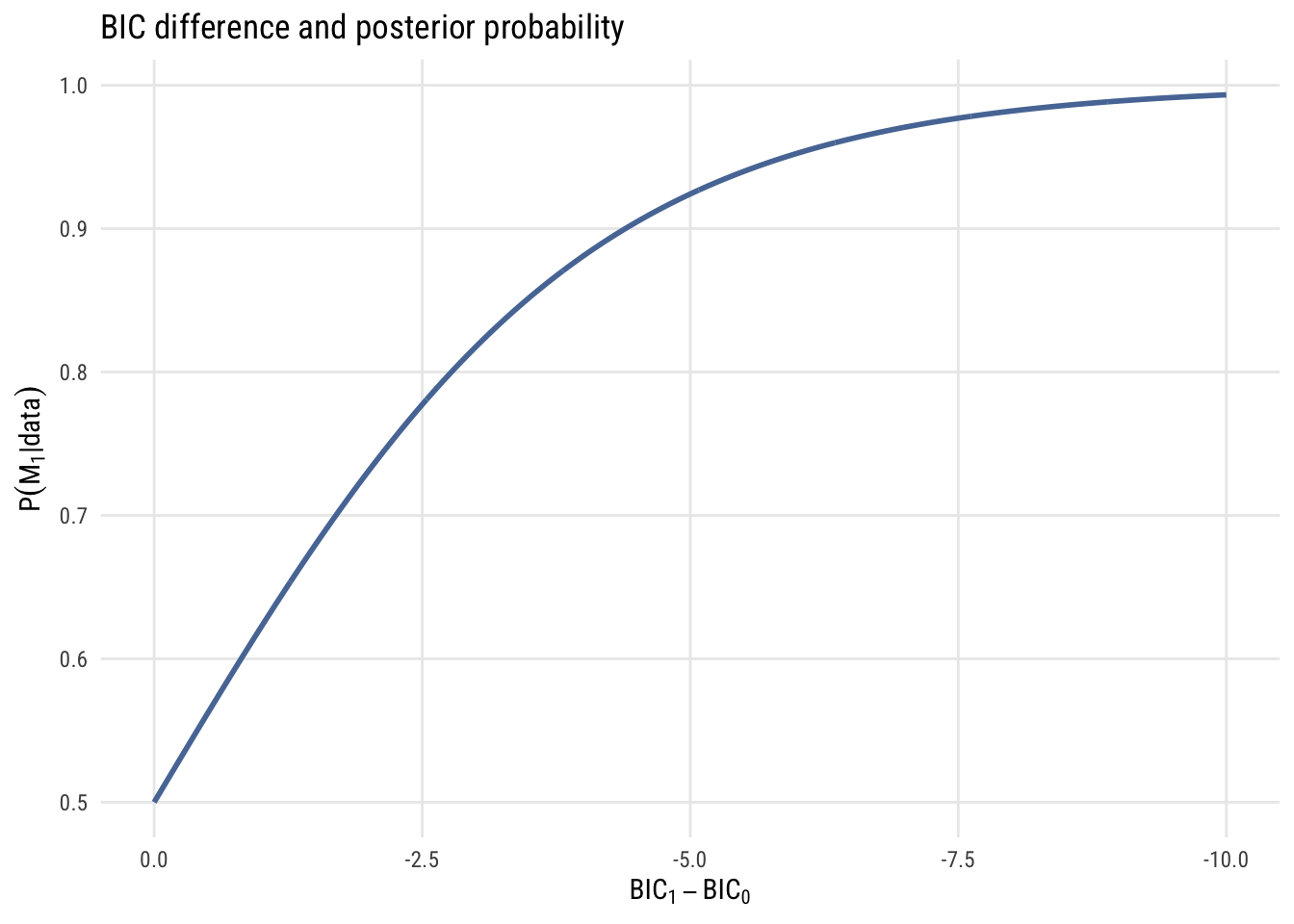

And if we’re only considering two models, we can convert Bayes’ factors into posterior probabilities as follows:

\[ P(M_1 | \text{data}) = \frac{\text{BF}_{01}}{1 + \text{BF}_{01}} \]

The graph below shows how BIC differences become posterior model probabilities.

Data prep

pp <- bf / (1 + bf)Show code

plt(pp ~ delta_bic,

type = "l",

lw = 2,

xlim = c(0, -10),

main = "BIC difference and posterior probability",

ylab = expression(P(M[1]* "|" * data)),

xlab = expression("BIC"[1] - "BIC"[0]))

Show code

ggplot(data.frame(delta_bic = delta_bic, pp = pp), aes(x = delta_bic, y = pp)) +

geom_line(linewidth = 1, color = tableau10[1]) +

scale_x_reverse(limits = c(0, -10)) +

labs(

title = "BIC difference and posterior probability",

y = expression(P(M[1]* "|" * data)),

x = expression("BIC"[1] - "BIC"[0]))

Based on these graphs, you can see that BIC differences of 10 or more are very strong evidence that one model is a much better fit than the other3.

3 Of course, you need to make sure than any models you compare are reasonable candidates. For example, models where the future predicts the past might be a great fit to the data, but they should never be estimated in the first place! So things like the AIC and BIC don’t in any way exempt you from having to think scientifically.

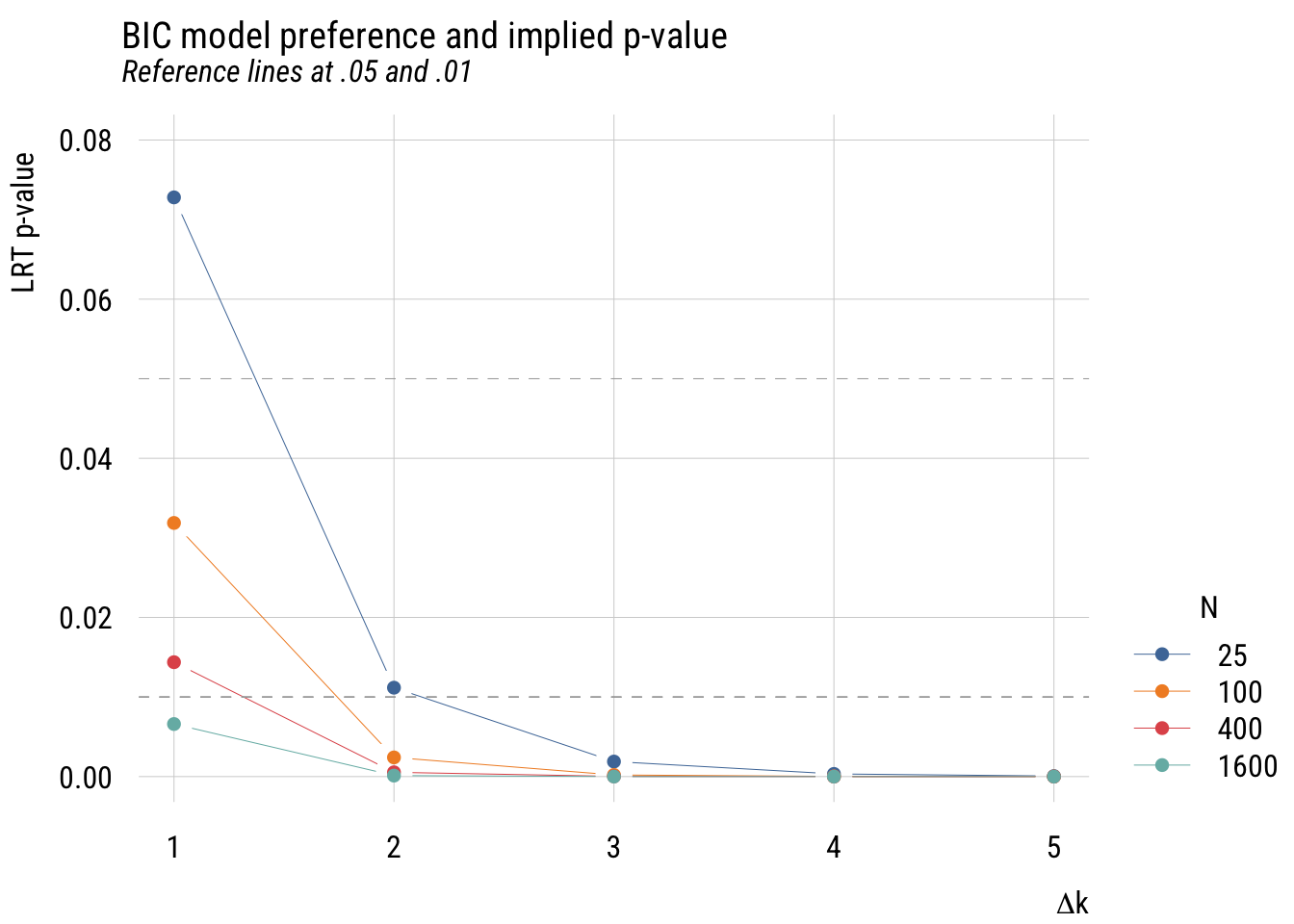

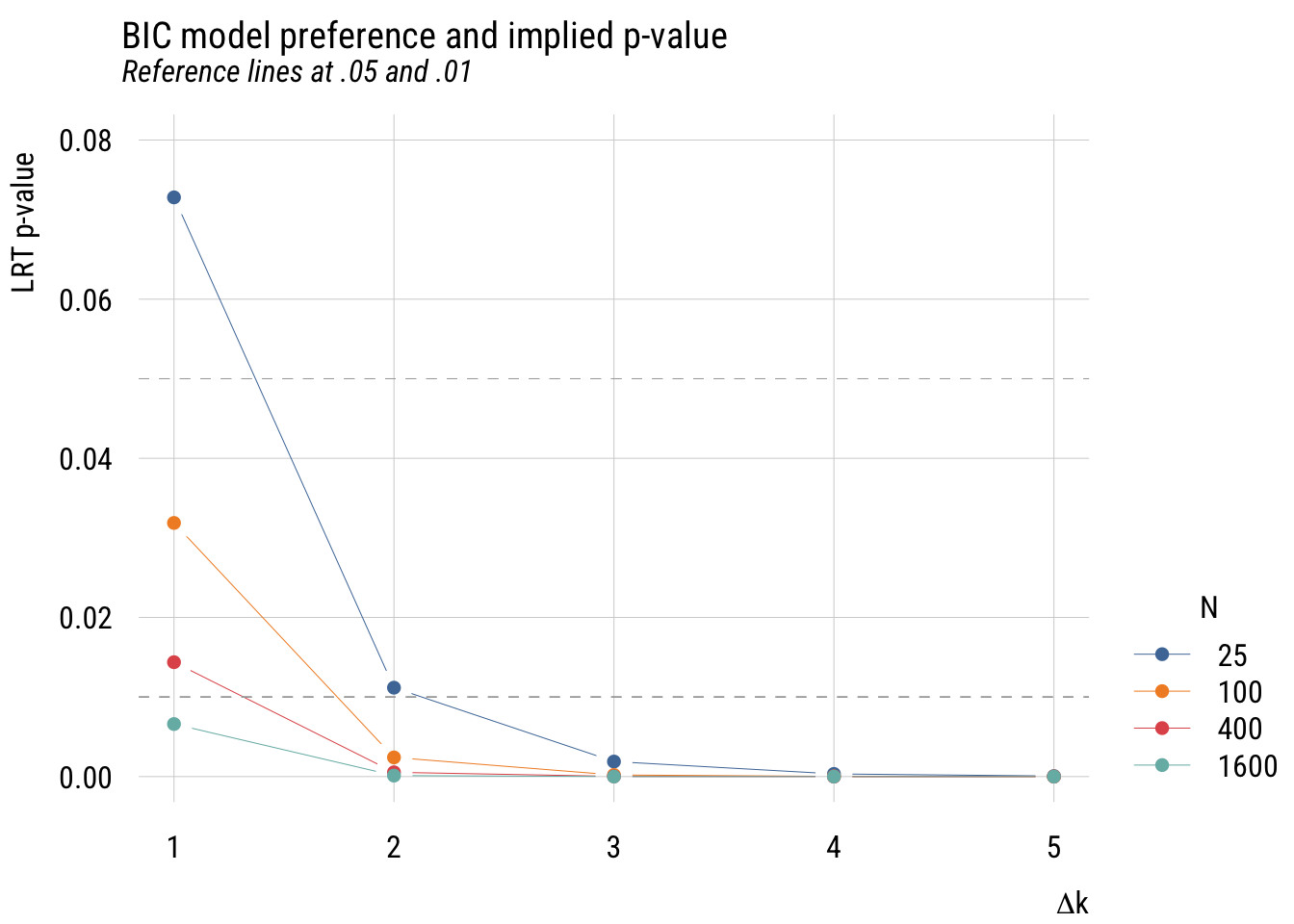

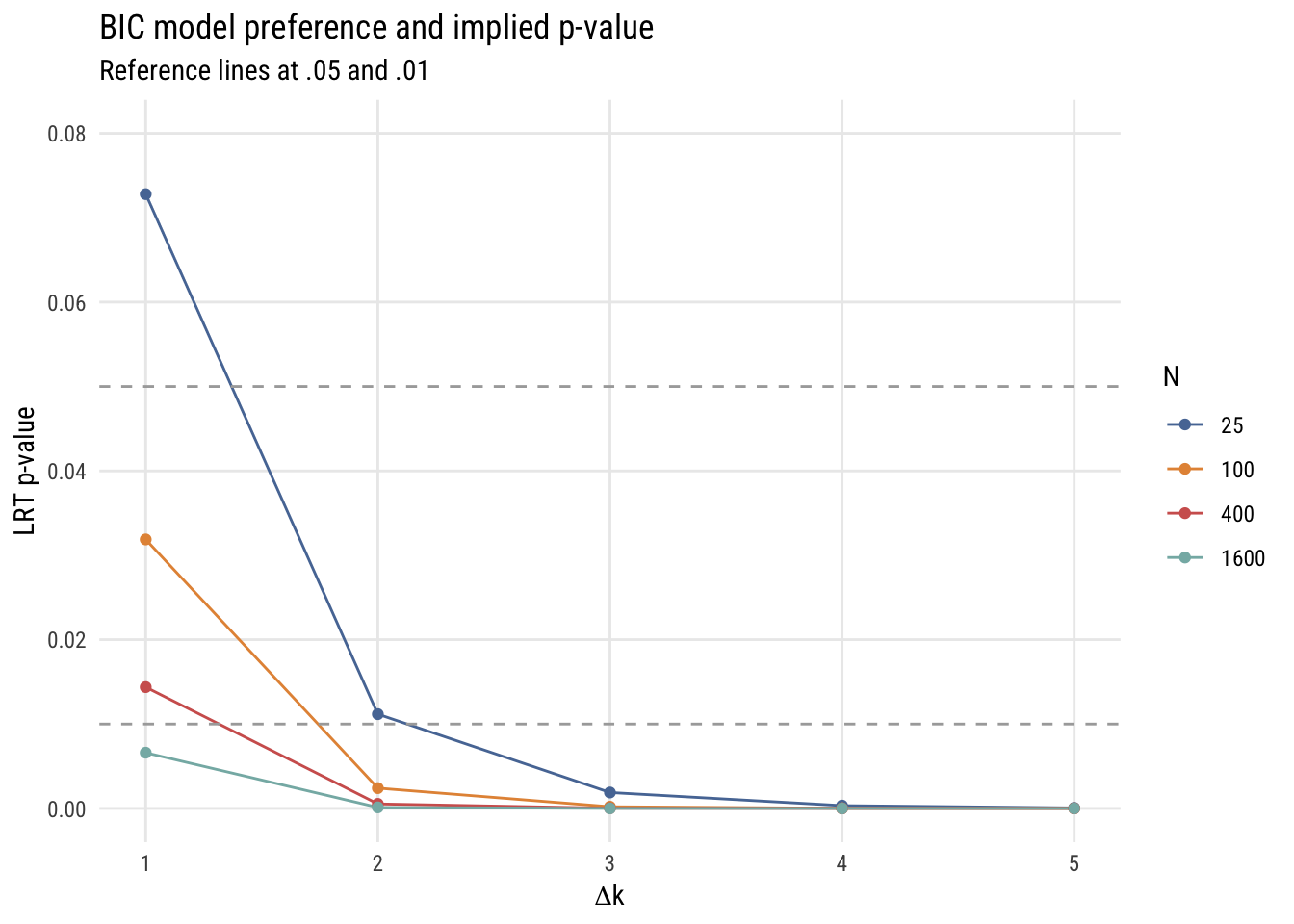

Implied p-values

As I discussed above, we don’t use AIC and BIC to control Type I error like we do for null hypothesis tests. Nevertheless, here are the p-values implied by the “take the lower” BIC decision rule.

Data prep

bic_to_p <- expand_grid(

delta_k = 1:5,

n = c(25, 100, 400, 1600)) |>

rowwise() |>

mutate(p = 2 * (1 - pnorm(sqrt(log(n) * delta_k))),

logp = log10(p))Show code

plt(p ~ delta_k | factor(n),

data = bic_to_p,

type = "b",

main = "BIC model preference and implied p-value",

sub = "Reference lines at .05 and .01",

ylab = "LRT p-value",

ylim = c(0, .08),

xlab = expression(Delta * "k"),

legend = list("bottomright!", title = "N"))

plt_add(type = type_hline(h = .05), lty = 2, col = "darkgray")

plt_add(type = type_hline(h = .01), lty = 2, col = "darkgray")

Show code

ggplot(bic_to_p, aes(x = delta_k, y = p, color = factor(n))) +

geom_line() +

geom_point() +

geom_hline(yintercept = .05, linetype = "dashed", color = "darkgray") +

geom_hline(yintercept = .01, linetype = "dashed", color = "darkgray") +

coord_cartesian(ylim = c(0, .08)) +

labs(

title = "BIC model preference and implied p-value",

subtitle = "Reference lines at .05 and .01",

y = "LRT p-value",

x = expression(Delta * "k"),

color = "N")

This is more complicated than the AIC graph because the results depend on the sample size. But in any case, you can see that the implied p-values are much stricter.

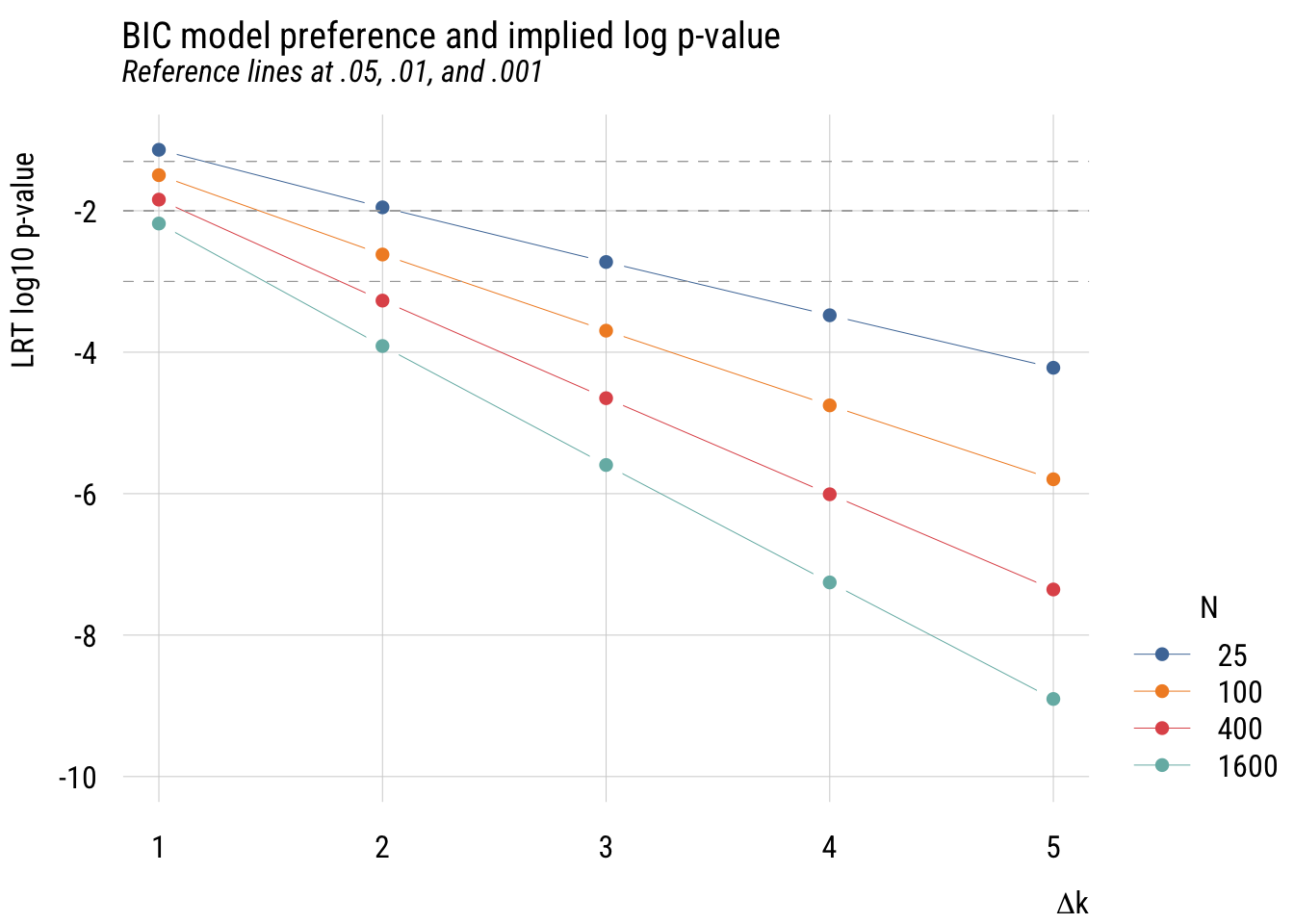

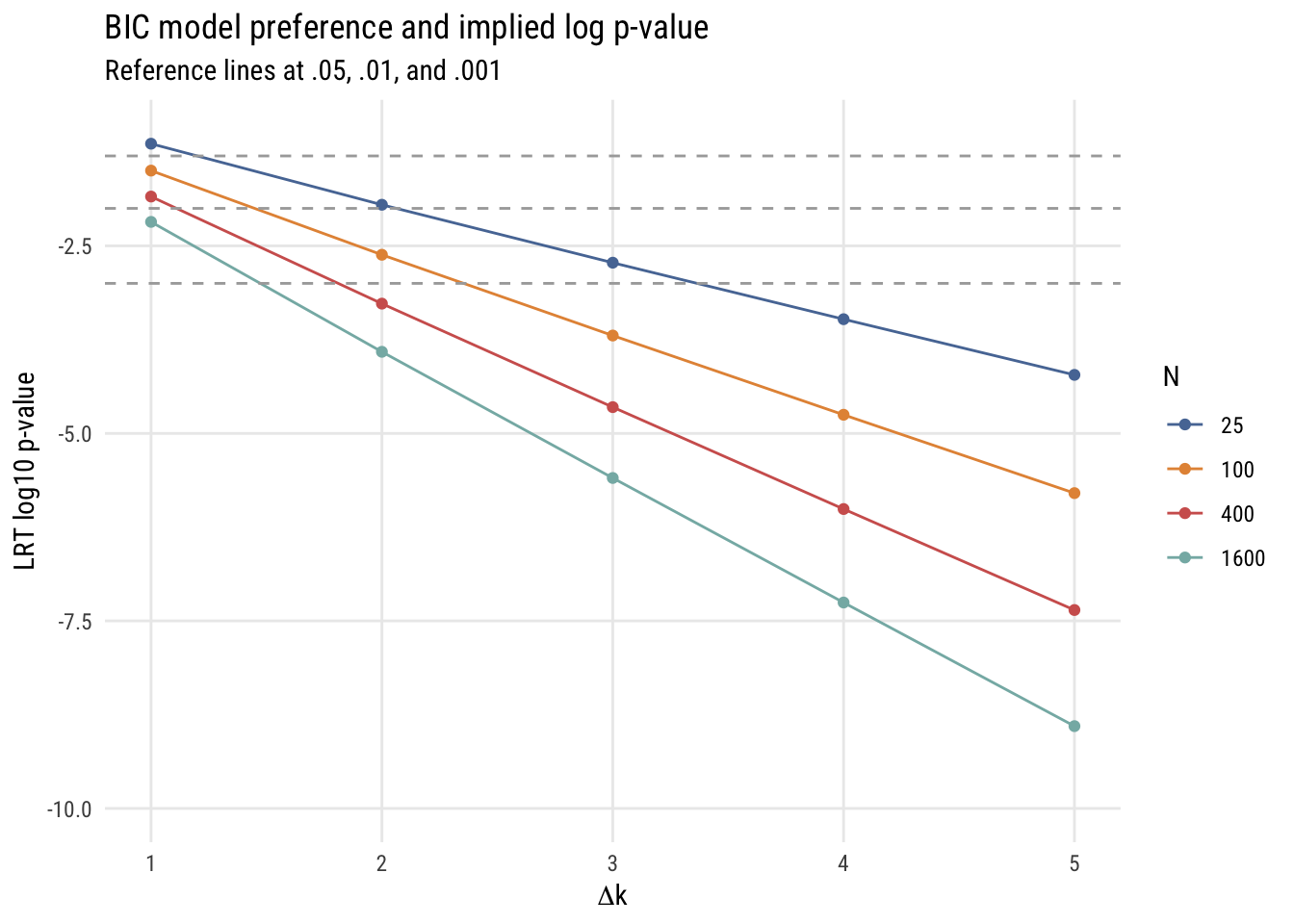

We can make this a little easier to see by looking at the same information with base-10 log p-value on the y-axis.

Show code

plt(logp ~ delta_k | factor(n),

data = bic_to_p,

type = "b",

main = "BIC model preference and implied log p-value",

sub = "Reference lines at .05, .01, and .001",

ylab = "LRT log10 p-value",

xlab = expression(Delta * "k"),

ylim = c(-10, -1),

legend = list("bottomright!", title = "N"))

plt_add(type = type_hline(h = log10(.05)), lty = 2, col = "darkgray")

plt_add(type = type_hline(h = log10(.01)), lty = 2, col = "darkgray")

plt_add(type = type_hline(h = log10(.001)), lty = 2, col = "darkgray")

Show code

ggplot(bic_to_p, aes(x = delta_k, y = logp, color = factor(n))) +

geom_line() +

geom_point() +

geom_hline(yintercept = log10(.05), linetype = "dashed", color = "darkgray") +

geom_hline(yintercept = log10(.01), linetype = "dashed", color = "darkgray") +

geom_hline(yintercept = log10(.001), linetype = "dashed", color = "darkgray") +

coord_cartesian(ylim = c(-10, -1)) +

labs(

title = "BIC model preference and implied log p-value",

subtitle = "Reference lines at .05, .01, and .001",

y = "LRT log10 p-value",

x = expression(Delta * "k"),

color = "N")

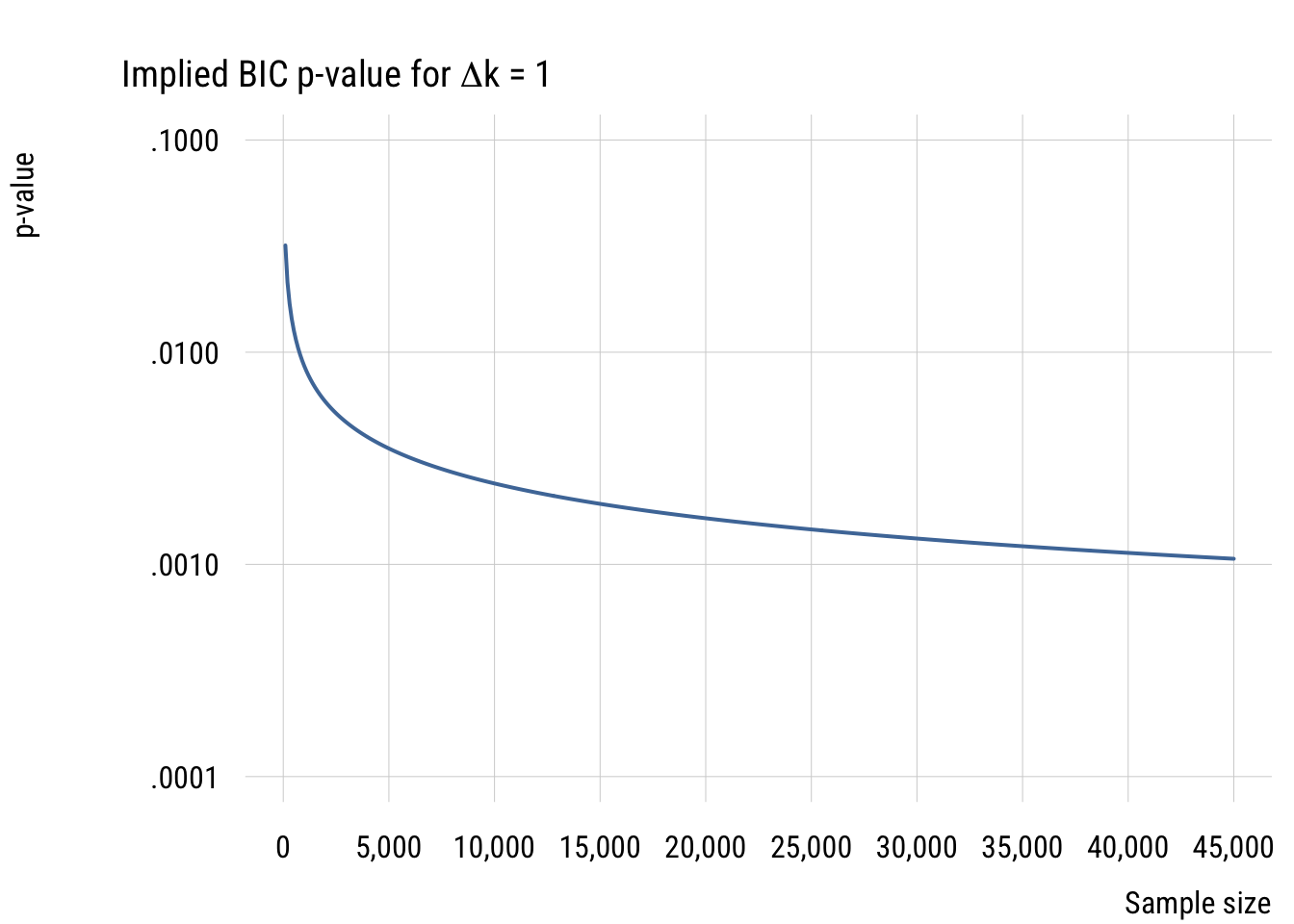

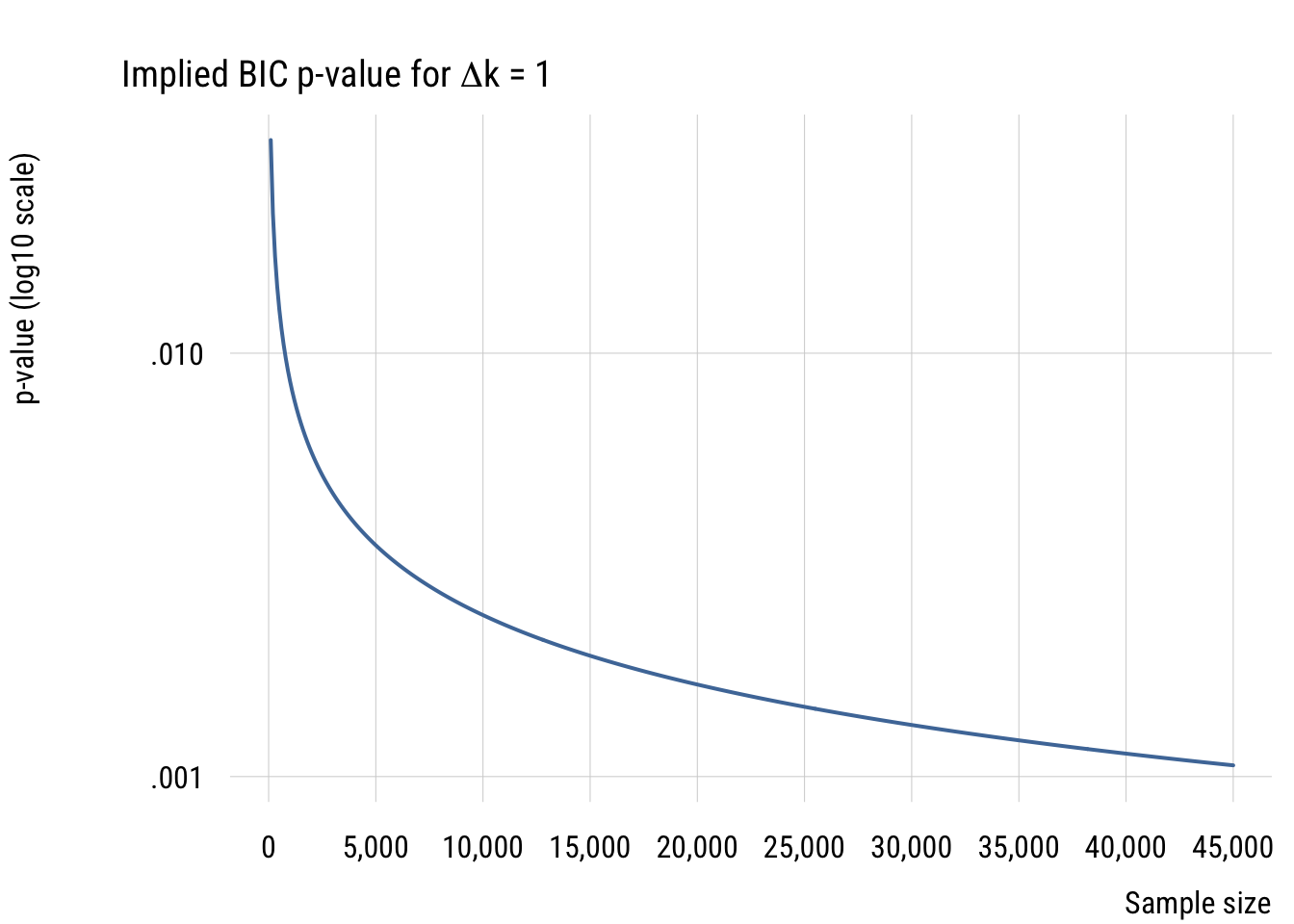

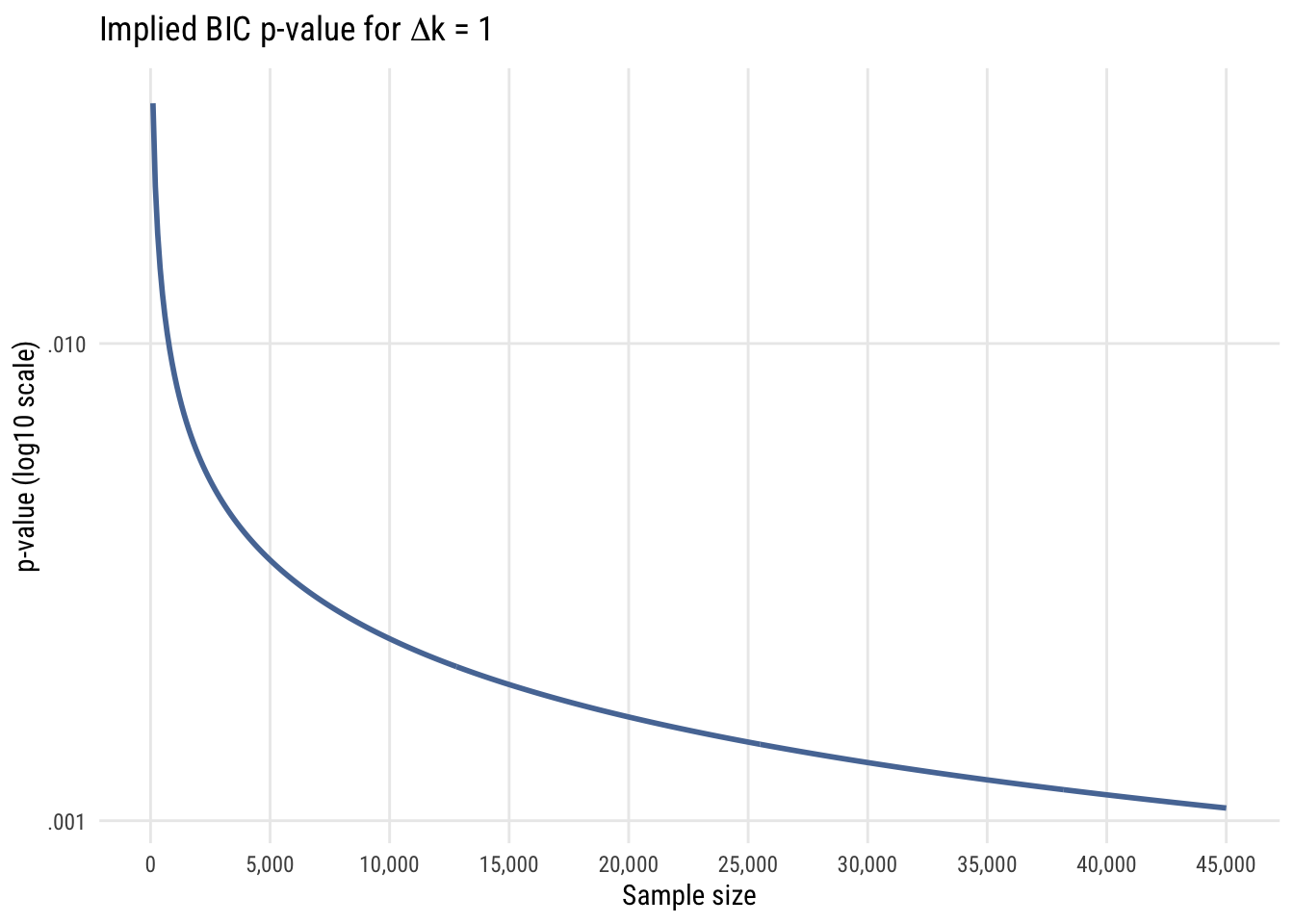

Finally, since right now we’re focused primarily on comparing models with only one parameter difference, let’s look at the implied p-value difference for such comparisons with different sample sizes.

Data prep

bic_to_p <- tibble(

n = seq(100, 45000, 100)) |>

rowwise() |>

mutate(p = 2 * (1 - pnorm(sqrt(log(n) * 1))),

logp = log10(p))Show code

plt(logp ~ n,

data = bic_to_p,

type = "l",

lw = 2,

main = expression("Implied BIC p-value for" ~ Delta * "k = 1"),

ylab = "p-value (log10 scale)",

xlab = "Sample size",

xaxl = "comma",

xaxb = seq(0, 45000, 5000),

yaxb = c(-3, -2),

yaxl = function(z) {

sub("^0\\.", ".", format(10^z, scientific = FALSE, trim = TRUE))

})

Show code

ggplot(bic_to_p, aes(x = n, y = logp)) +

geom_line(linewidth = 1, color = tableau10[1]) +

scale_x_continuous(

labels = scales::comma,

breaks = seq(0, 45000, 5000)) +

scale_y_continuous(

breaks = c(-4, -3, -2, -1),

labels = function(z) sub("^0\\.", ".", format(10^z, scientific = FALSE, trim = TRUE))) +

labs(

title = expression("Implied BIC p-value for" ~ Delta * "k = 1"),

y = "p-value (log10 scale)",

x = "Sample size")

In all these cases, it’s easy to see that using the BIC as a model selection decision rule is functionally equivalent to choosing a much smaller \(\alpha\) level than is conventional in social and behavioral science. For that reason, I think it’s a good choice for self-discipline.

Example use

In R, getting the BIC is just as easy as getting the AIC.

m0 <- lm(life_exp ~ 1, data = states)

m1 <- lm(life_exp ~ 1 + hs_grad, data = states)

BIC(m0, m1) df BIC

m0 2 178.1532

m1 3 161.3670In this case it’s very clear that if we’re choosing between these models as “true model” candidates, the model that conditions on hs_grad is preferred.

Concluding thoughts

AIC and BIC are useful ways to think about model selection without having to commit either to full null-hypothesis significance testing (which has some issues, especially when you think about it) or to full Bayesian statistics (which is pretty complex for beginners).

These approaches have another advantage that we might not quite be ready to appreciate, which is that we can use them to compare non-nested models. All the models we’ve seen so far have been nested, which means the simpler model is a subset of the more complex model. You can only use \(F\) tests and LRTs to compare nested models. We will revisit this issue later.